Music technology necromancy: the curious case of the return of modular synthesizers.

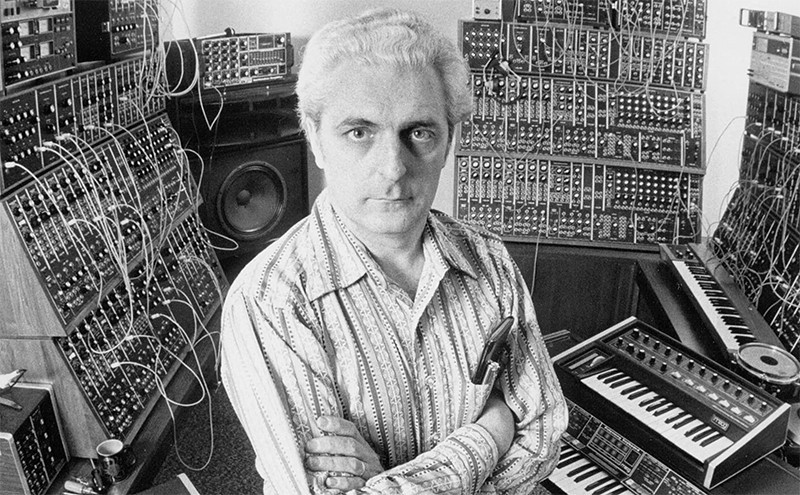

If you’re a music aficionado, or even just curious to peek at what’s going on, either on stage or inside music studios, you might have noticed, in recent years, the appearance of bizarre creatures that look just like this one:

First of all, for those who did: don’t panic. They may look very similar, and you probably saw a red wire and a blue one (or, most probably, way more than just one of each), but they are not bombs. They’re something way more interesting: modular synthesizers!

Most of you probably already know what synthesizers are. For those who don’t: a synthesizer is a musical instrument (usually a keyboard) that owes its name to its capacity to synthesize sounds by electronic, or virtual, means. In other words: whenever you hear something you can’t imagine being played on a normal “real” instrument, it’s probably a synthesizer.

As an example, here’s the best mainstream metal keyboardist who ever existed in this Solar System, talking about some of his synthetic sounds:

As you can see, all of his equipment looks a lot like “just a keyboard”, even though it is still a synthesizer.

And here’s where things get interesting.

How the first modular synths were born.

Back in the days when synthesizers were first sold to the public, they didn’t come ready-made: you bought all the pieces individually, and then cobbled together what you wanted your synthesizer to be.

This was for many reasons. Here are a few:

- Early technology: bulky. The first synthesizers were fully analog, built from discrete electronic components (the very earliest experimental machines even worked with valves!), which made them extremely bulky.

- Early technology pt. 2: unclear purpose. Since they hadn’t been on the market for many years, people hadn’t really figured out what they wanted to do with them. So the modular design left plenty of room to decide what your synthesizer was going to be.

- Early technology pt. 3: price. With such an early technology, prices were still very high, so one way to curb them was to sell individual parts. That way, you could buy just what you could afford.

You might have noticed a pattern: none of these reasons is a feature – they’re all problems.

Which brings us to the next point:

Modular synthesizers are the cut-throat razors of synthesizers: quirky fun – but obsolete.

Once upon a time, they were the only solution. But now, they just don’t make sense, unless you simply want something that looks pretty on your desk, or a bit of a different experience once in a while. Just like cut-throat razors: they’re fun, and they do a very good job. But nobody would question how handy and effective modern electric razors are.

Modern synthesizers look a lot more tame, but they have synthesis powers beyond even the wildest imagination of the days when modular synthesis was the only choice. To get a sense of scale, think about all the things you can do with a modern computer. Now think of using that computer to create sounds.

Fun fact: he who killed modular synthesizers.

One day, a great musical instrument maker asked himself:

“What are the most common modular synthesizer pieces that people buy?”

The answer was: a few oscillators, a filter, an envelope generator, a keyboard.

From there, he thought:

“What if I just pack these things together, and sell them ready to be played? Rather than the usual DIY mess?”

And thus, this beauty was born:

The Moog Model D, aka “Minimoog” (as opposed to its big brother, “The Moog” – a beast meant to be airlifted to the stage). It is one of the most important musical instruments ever made, and decades after its arrival it still shapes the form of modern synthesizers (3 oscillators, 1 filter, 1 envelope is still the standard layout for music synthesizers).
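For the curious, here is a rough idea of what that “3 oscillators, 1 filter, 1 envelope” layout means in practice. This is only a minimal digital sketch in plain Python, not a model of the Minimoog’s analog circuitry: the function names (`sawtooth`, `low_pass`, `envelope`, `voice`), the sample rate, the detune amounts and the cutoff value are all assumptions picked for illustration, and the one-pole filter is far simpler than what a real instrument uses.

```python
import math

SAMPLE_RATE = 44100  # samples per second (an assumed, common value)


def sawtooth(freq, n_samples, sample_rate=SAMPLE_RATE):
    """Naive sawtooth oscillator: ramps from -1 towards 1 once per cycle."""
    return [2.0 * ((i * freq / sample_rate) % 1.0) - 1.0 for i in range(n_samples)]


def low_pass(signal, cutoff_hz, sample_rate=SAMPLE_RATE):
    """One-pole low-pass filter: smooths the signal, dulling the high frequencies."""
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    out, y = [], 0.0
    for x in signal:
        y += alpha * (x - y)  # move part of the way towards the input
        out.append(y)
    return out


def envelope(n_samples, attack_s, decay_s, sample_rate=SAMPLE_RATE):
    """Linear attack/decay envelope: rises from 0 to 1, then falls back to 0."""
    attack_n = max(1, int(attack_s * sample_rate))
    decay_n = max(1, int(decay_s * sample_rate))
    env = []
    for i in range(n_samples):
        if i < attack_n:
            env.append(i / attack_n)
        else:
            env.append(max(0.0, 1.0 - (i - attack_n) / decay_n))
    return env


def voice(freq, duration_s=0.5, cutoff_hz=1200.0):
    """Hypothetical single voice: 3 oscillators -> 1 filter -> 1 envelope."""
    n = int(duration_s * SAMPLE_RATE)
    # Three oscillators: the note, a slightly detuned copy, and one an octave below.
    oscs = [sawtooth(f, n) for f in (freq, freq * 1.005, freq / 2.0)]
    mixed = [sum(samples) / len(oscs) for samples in zip(*oscs)]
    filtered = low_pass(mixed, cutoff_hz)
    env = envelope(n, attack_s=0.01, decay_s=duration_s - 0.01)
    # The envelope shapes the loudness of the filtered sound over time.
    return [s * e for s, e in zip(filtered, env)]


note = voice(220.0)  # half a second of an A below middle C
```

The result, `note`, is just a list of numbers between -1 and 1. To actually hear it, you would still need to write those samples to an audio file or send them to a sound card, which is left out here to keep the sketch short.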

And the great musical instrument maker was Robert Moog (1934 – 2005).

Once in a while, remember to thank him for all the music that has been made thanks to him.