The use of artificial intelligence in music (written by a musician)

I’m seeing more and more news about “use of AI in music”: let’s have a closer look at this topic – this time, with a musician around (me).

First things first: disambiguation about “What is an AI”

There are two kinds of “artificial intelligence”: one true, one fake.

The “true” intelligence, the one you can have a nice chat with and ask for its opinion about a movie, is called “strong AI” (https://www.investopedia.com/terms/s/strong-ai.asp). Think of Star Trek’s Data or Star Wars’ C-3PO.

The “fake” intelligence, the one we actually have, which you can see at its best in video games and parking gates, is called “weak AI” (https://www.investopedia.com/terms/w/weak-ai.asp). “Fake” because it isn’t actually an “intelligence”, as in: the capability for cognition and self-awareness. It’s just a long set of predetermined “if X then Y” rules. It cannot create anything original. It cannot feel, nor is it supposed to: it’s called “intelligence” as a handy label, not because it is actually intelligent. No shock for anyone who has ever played video games (and who surely never thought of having a conversation with a Quake 2 end-of-level boss just because it had “artificial intelligence”).

With this out of the way, let’s go further.

How is music born?

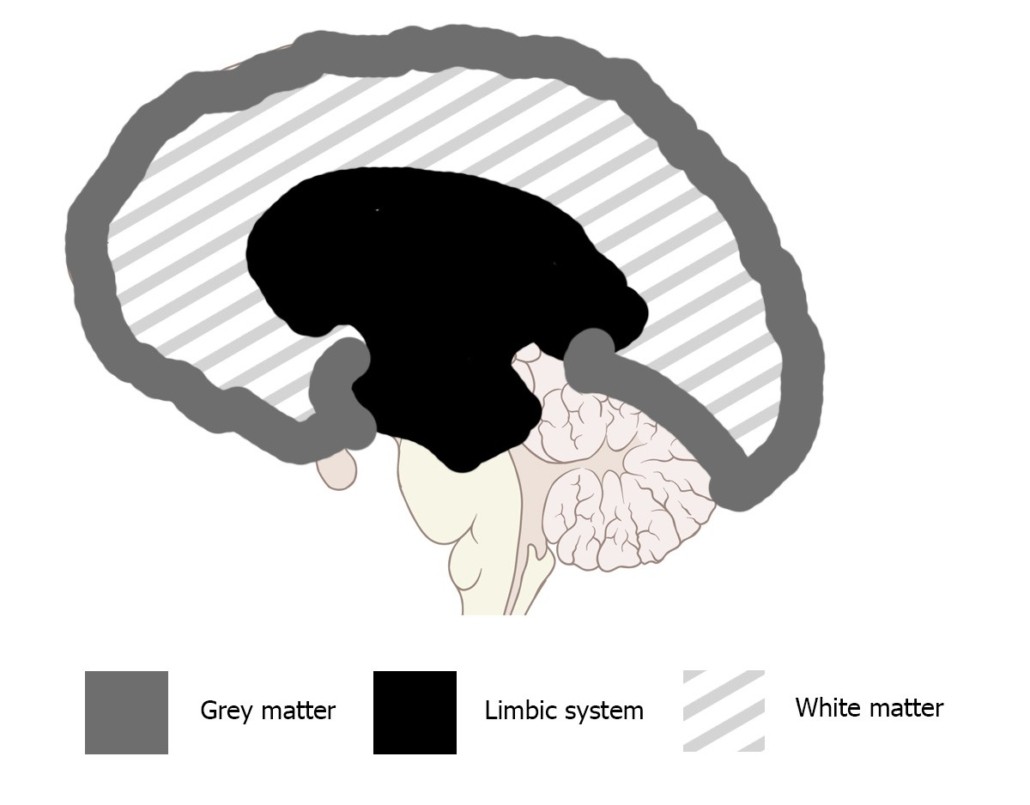

Music is born in the depths of our brain – the infamous limbic system. It’s the part that creates emotions, and some of you might have heard it called the “reptilian brain” (loosely: in the triune-brain model that label strictly belongs to the brainstem and basal ganglia, while the limbic system is the “old mammalian” layer). Once these emotions are born, we can transform them into something practical by combining them with our “rational side” – the grey matter. (And, by the way, this is the process we call “creativity”: using our rational side to shape emotions into tangible creations.)

As quick reference:

Grey matter = information processing (reason).

Limbic system = emotions (primal instincts).

In practical terms, for music: the limbic system “generates emotions”, the grey matter “rationalizes them into music”. These two systems are physically interconnected by the white matter. See the picture above for visual reference.

Music is, more or less, a physical sublimation of our emotions – which is, by the way, the definition of art.

No emotions = no music.

I guess you found your answer already

Until machines are able to feel, they won’t be able to make music.

As of right now, our machines can only pretend to feel, just as you can make your car pretend to be happy by drawing a smile on its hood. They cannot actually feel anything. Which is fine: people do just fine at being people.

…or do they?

Why would you want to use AI to make music?

Now: this is an interesting question.

If people are perfectly able to make music, why would you want to use machines for it?

Very simple answer: not everyone has trained enough to acquire the necessary talent to make music. So they’re looking for ways to “cheat”: they hope to find tools that will do the work they didn’t do, as untalented people usually do. (The impulse behind it is a natural one: humans, deep down, are built for survival, no matter what, and if cheating is what it takes to make it out alive, that’s what they’re going to do. But to live together civilly, we need to avoid putting ourselves in situations that push us to harm others – like a pathological craving for the status symbol of “music maker”.)

What weak AIs can actually be helpful for in music

If you’re a music software company, this section is for you: there are a lot of areas in music production where weak AIs can be useful.

For instance (but not limited to):

- MIDI humanization (software that humanizes MIDI patterns – seriously: why do we have to do this damn thing all by hand?? It’s 2020!)

- MIDI scrubbing (software that recognizes likely MIDI recording errors – again: blatant errors, like a stray 1/128 note, are pretty easy to spot and could be deleted automatically, rather than having someone go through the track and do it by hand)

- MIDI drum arranger (software that builds drum patterns according to your tastes – drum patterns are often repetitive, yet every single beat has to be written by hand, because the software I’ve just described doesn’t exist! Why can’t we have a tool we can simply tell “8 crescendo hits on the snare drum, and then 2 hard hits, clockwise, starting from the 1st tom, on all toms”?)

- And so on…
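To give a rough idea of how little machinery these three ideas need, here is a minimal Python sketch. Everything in it is my own assumption for illustration, not how any existing product works: the `Note` class, the function names, the jitter amounts, the “anything shorter than a 1/64 note is a mistake” threshold, and the choice of General MIDI drum notes (38 for the snare, 50/47/43 for a three-tom kit).

```python
import random
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Note:
    start: float     # position in beats (a quarter note = 1.0)
    duration: float  # length in beats
    pitch: int       # MIDI note number
    velocity: int    # MIDI velocity, 1-127


def clamp_velocity(value):
    """Round to an integer and keep it inside the valid MIDI range."""
    return max(1, min(127, int(round(value))))


def humanize(notes, timing_jitter=0.02, velocity_jitter=8, seed=None):
    """Nudge every note's start time and velocity by a small random amount."""
    rng = random.Random(seed)
    result = []
    for note in notes:
        start = max(0.0, note.start + rng.uniform(-timing_jitter, timing_jitter))
        velocity = clamp_velocity(
            note.velocity + rng.uniform(-velocity_jitter, velocity_jitter)
        )
        result.append(replace(note, start=start, velocity=velocity))
    return result


def scrub(notes, min_duration=1 / 16):
    """Drop notes too short to be intentional.

    Assumed threshold: 1/16 of a beat, i.e. a 1/64 note, so a stray
    1/128 note (1/32 of a beat) gets removed.
    """
    return [note for note in notes if note.duration >= min_duration]


def crescendo(pitch, hits, step=0.5, start=0.0, low=40, high=110):
    """Evenly spaced hits on one drum, velocity rising from low to high."""
    notes = []
    for i in range(hits):
        fraction = i / (hits - 1) if hits > 1 else 1.0
        velocity = clamp_velocity(low + fraction * (high - low))
        notes.append(Note(start + i * step, step / 2, pitch, velocity))
    return notes


def tom_fill(toms, hits_per_tom=2, step=0.5, start=0.0, velocity=120):
    """Hard hits on each tom in turn, in the order the toms are given."""
    sequence = [pitch for pitch in toms for _ in range(hits_per_tom)]
    return [
        Note(start + i * step, step / 2, pitch, velocity)
        for i, pitch in enumerate(sequence)
    ]


# Assumed General MIDI drum notes: snare, then high / low-mid / floor tom.
SNARE, TOMS = 38, [50, 47, 43]

# "8 crescendo hits on the snare, then 2 hard hits on each tom, in order"
pattern = crescendo(SNARE, hits=8) + tom_fill(TOMS, start=8 * 0.5)
humanized = humanize(scrub(pattern), seed=2020)
```

Passing a fixed `seed` makes the “human” feel repeatable, so you can audition a take and get the same one back later. Turning the resulting list into an actual MIDI file is a separate step that I’m leaving out here.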

I might write a separate post about this, because it’s a topic that really interests me.
